Open Research Toolkit

2026 | UI design, design systems, field research, prototyping, development

How do you give researchers data-processing superpowers without turning them into developers? Open Research Toolkit (ORT) is a component library designed to be assembled by LLM agents into private, local-first research interfaces. The core bet: if components are built to be programmatically wired together, an AI agent can compose a research tool faster than a human can learn the CLI alternatives.

Project introduction

The project came directly from fieldwork alongside social scientists and anthropologists at Sciences Po médialab, where the pattern was consistent: researchers had data (interview transcripts, survey results, field notes, media archives) and they had questions. What they lacked was a bridge between the two that didn't require opening a terminal. The toolkit exists because that bridge shouldn't be a person. It should be a system an LLM can assemble in conversation with a researcher, using components that already understand the data formats, privacy constraints, and processing tasks common in qualitative social research.

A design system for researchers

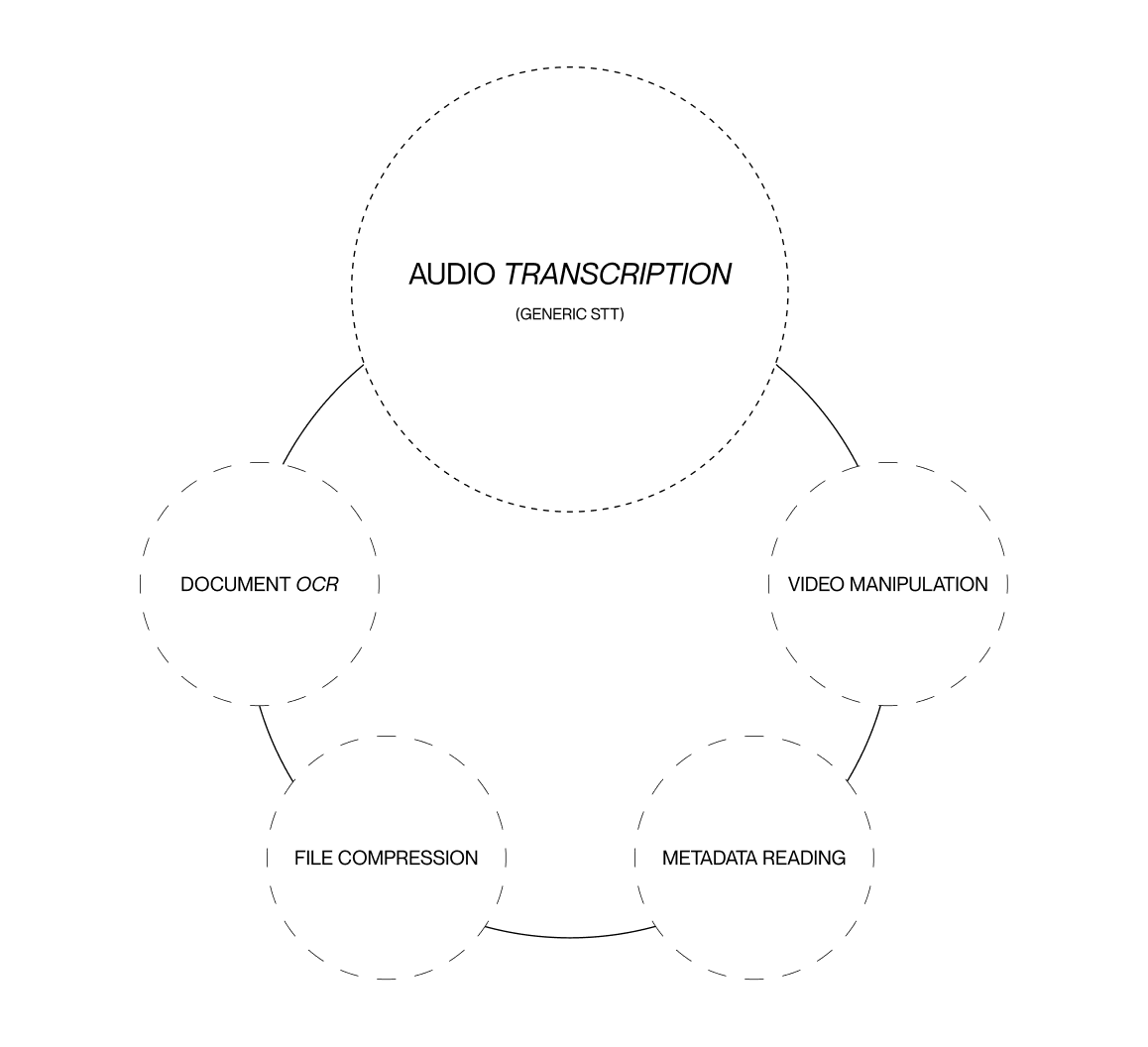

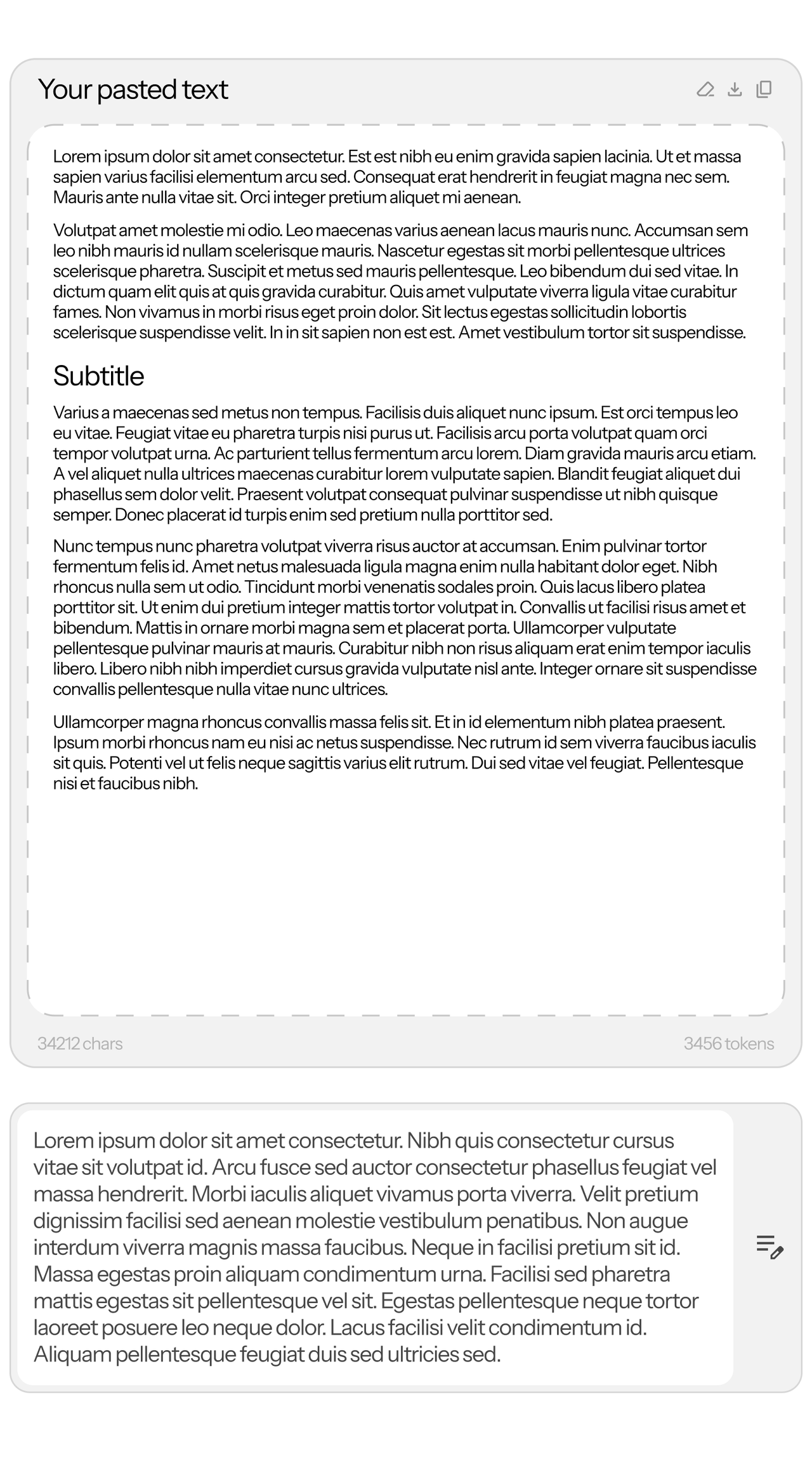

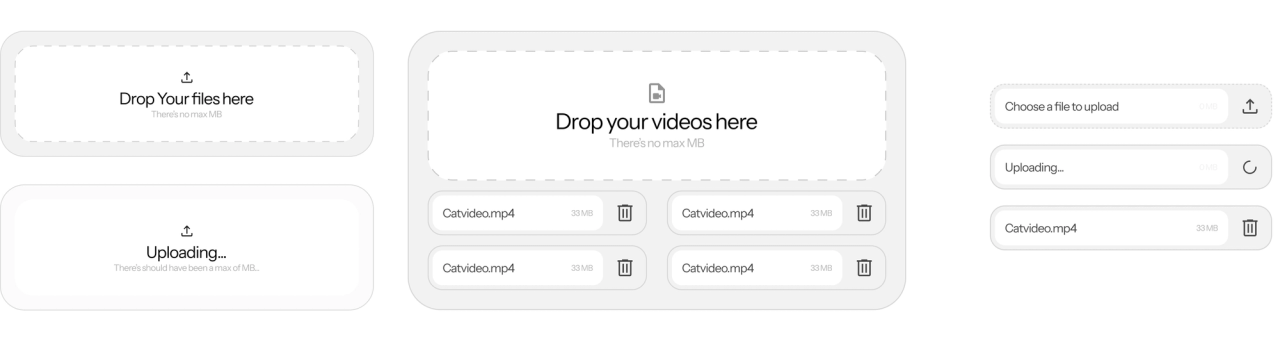

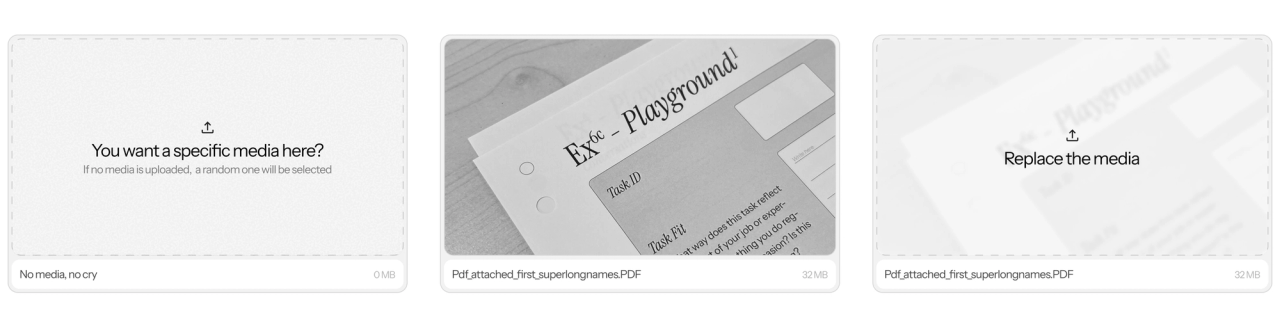

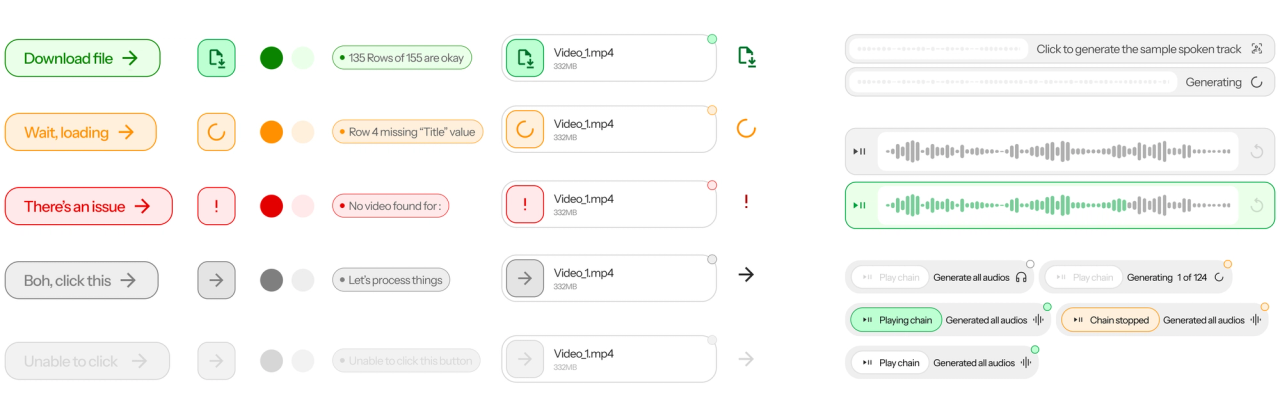

The toolkit is structured as a Svelte component library in which each component is independently functional and designed for programmatic assembly. Components communicate through shared variable stores rather than hard-coded parent-child relationships, which means an LLM agent can wire them together by reasoning about data flow rather than component hierarchy. The library spans two categories. Functional components handle specific research tasks: audio transcription via a WebGPU-accelerated Cohere ONNX model, text extraction via local Tesseract, structured data processing, and search and retrieval across document collections. System components (buttons, tokens, layout primitives, form elements) maintain visual and behavioral consistency across whatever interface the agent assembles. The system layer matters because the researcher shouldn't feel the tool was machine-generated: the interface must communicate trustworthiness, not technical novelty.
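The store-based wiring described above can be sketched in a few lines. This is a minimal illustration, not the toolkit's actual code: `createSharedStore` is a hand-rolled stand-in for the subscribe/set contract that Svelte's `writable` store provides, and `TranscriptSegment`, `publishTranscript`, and `createTranscriptSearch` are hypothetical names chosen for the example.

```typescript
// Minimal store following the Svelte store contract (subscribe/set).
// In a Svelte app, `writable` from "svelte/store" would play this role.
type StoreListener<T> = (value: T) => void;

interface SharedStore<T> {
  subscribe(listener: StoreListener<T>): () => void;
  set(value: T): void;
}

function createSharedStore<T>(initial: T): SharedStore<T> {
  let current = initial;
  const listeners = new Set<StoreListener<T>>();
  return {
    subscribe(listener) {
      listeners.add(listener);
      listener(current); // the contract calls the listener immediately
      return () => {
        listeners.delete(listener);
      };
    },
    set(value) {
      current = value;
      listeners.forEach((notify) => notify(value));
    },
  };
}

// Hypothetical data shape shared by both components.
interface TranscriptSegment {
  start: number; // offset in seconds
  text: string;
}

// The store is the only thing the two components have in common.
const transcriptStore = createSharedStore<TranscriptSegment[]>([]);

// Hypothetical "transcription" component: writes its output to the store.
function publishTranscript(segments: TranscriptSegment[]): void {
  transcriptStore.set(segments);
}

// Hypothetical "search" component: reads whatever the store currently holds.
function createTranscriptSearch(source: SharedStore<TranscriptSegment[]>) {
  let indexed: TranscriptSegment[] = [];
  const unsubscribe = source.subscribe((segments) => {
    indexed = segments;
  });
  return {
    query(term: string): TranscriptSegment[] {
      const needle = term.toLowerCase();
      return indexed.filter((s) => s.text.toLowerCase().includes(needle));
    },
    destroy: unsubscribe,
  };
}

const transcriptSearch = createTranscriptSearch(transcriptStore);
publishTranscript([
  { start: 0, text: "We moved to the village in 1998." },
  { start: 12, text: "The market closed two years later." },
]);
console.log(transcriptSearch.query("market"));
```

Neither component imports the other. Swapping the search component for a different consumer means subscribing something else to the same store, which is the kind of change an agent can reason about as data flow.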

Overcoming CLI limitations

The command line presupposes three things that non-STEM research environments cannot take for granted: programming knowledge, familiarity with library ecosystems, and comfort inside an IDE. Even with LLM agents now capable of writing code from natural language, the ergonomic gap remains. A researcher who knows what they want to do with their data (transcribe interviews, cross-reference themes, analyze sentiment) still needs someone to translate intent into tool selection, dependency management, and execution. ORT collapses that gap. Instead of 'find the right Python library,' the instruction becomes 'connect the transcription component to the search component.' The agent's job becomes orchestration, not invention.
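One way to picture orchestration instead of invention: the agent emits a short wiring plan, and the plan is checked against a registry of known components before anything is assembled. The sketch below is an assumption about how that could work, not ORT's actual API. The component names, the port types, and `validateWiring` are all hypothetical.

```typescript
// Hypothetical port types; the real toolkit's data contracts may differ.
type PortType = "audio" | "transcript" | "text" | "results";

interface ComponentSpec {
  inputs: Record<string, PortType>;
  outputs: Record<string, PortType>;
}

// Hypothetical registry describing what each component consumes and produces.
const componentRegistry: Record<string, ComponentSpec> = {
  AudioUpload: { inputs: {}, outputs: { file: "audio" } },
  Transcription: { inputs: { audio: "audio" }, outputs: { transcript: "transcript" } },
  TranscriptSearch: { inputs: { corpus: "transcript" }, outputs: { hits: "results" } },
};

// One connection in an agent's plan, written as "Component.port" on each side.
interface Wire {
  from: string;
  to: string;
}

// Look up own properties only, so keys like "constructor" are not mistaken for entries.
function ownValue<T>(table: Record<string, T>, key: string): T | undefined {
  return Object.prototype.hasOwnProperty.call(table, key) ? table[key] : undefined;
}

function splitPortRef(ref: string): [string, string] {
  const dot = ref.indexOf(".");
  return dot < 0 ? [ref, ""] : [ref.slice(0, dot), ref.slice(dot + 1)];
}

// Returns human-readable problems; an empty list means the plan is consistent.
function validateWiring(wires: Wire[], registry: Record<string, ComponentSpec>): string[] {
  const problems: string[] = [];
  for (const wire of wires) {
    const [srcName, srcPort] = splitPortRef(wire.from);
    const [dstName, dstPort] = splitPortRef(wire.to);
    const src = ownValue(registry, srcName);
    const dst = ownValue(registry, dstName);
    const outType = src ? ownValue(src.outputs, srcPort) : undefined;
    const inType = dst ? ownValue(dst.inputs, dstPort) : undefined;
    if (outType === undefined) {
      problems.push(`unknown output: ${wire.from}`);
    } else if (inType === undefined) {
      problems.push(`unknown input: ${wire.to}`);
    } else if (outType !== inType) {
      problems.push(`${wire.from} (${outType}) does not match ${wire.to} (${inType})`);
    }
  }
  return problems;
}

// "Connect the transcription component to the search component", as a plan.
const plan: Wire[] = [
  { from: "AudioUpload.file", to: "Transcription.audio" },
  { from: "Transcription.transcript", to: "TranscriptSearch.corpus" },
];
console.log(validateWiring(plan, componentRegistry)); // logs [] because every connection type-checks
```

The agent never writes processing code in this model. It picks from a closed vocabulary, and a mistake such as feeding raw audio into the search component is caught as a type mismatch before the interface is built.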

Privacy first: WebGPU and local inference

The privacy requirement is non-negotiable. European social and anthropological research routinely handles personal data (interview recordings, participant records, sensitive field notes) that cannot legally leave the researcher's machine. GDPR compliance means processing must happen locally, which rules out every cloud-based AI service. ORT runs inference entirely on-device through WebGPU-accelerated ONNX models loaded via Hugging Face Transformers. The stack has been tested on Mac hardware with Mistral Mini for text generation, Cohere for audio transcription, and a WebGPU text-to-speech model. For tasks exceeding local capacity, the toolkit connects to self-hosted Ollama or Olmx instances. GPU acceleration makes transcription near-real-time. Because inference runs in the browser through WebGPU, the entire toolkit ships as a static site with no server-side compute.
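The local-first rule with a self-hosted fallback can be expressed as a small decision function. The sketch below is illustrative and makes several assumptions: the memory-budget heuristic, `chooseBackend`, and `buildOllamaRequest` are hypothetical helpers, and the URL is Ollama's default local address. The two external facts it relies on are that browsers with WebGPU expose `navigator.gpu.requestAdapter()`, and that Ollama accepts a POST to `/api/generate` with `model`, `prompt`, and `stream` fields.

```typescript
// Hypothetical description of where a task should run.
type InferenceBackend =
  | { kind: "webgpu" }
  | { kind: "self-hosted"; baseUrl: string };

// Feature-detect WebGPU without assuming browser typings are installed.
async function hasWebGPU(): Promise<boolean> {
  const nav = (globalThis as unknown as {
    navigator?: { gpu?: { requestAdapter(): Promise<unknown> } };
  }).navigator;
  if (!nav?.gpu) return false;
  try {
    // requestAdapter resolves to null when no suitable GPU adapter exists.
    return (await nav.gpu.requestAdapter()) != null;
  } catch {
    return false;
  }
}

// Pure decision rule: stay on-device when WebGPU is available and the model
// fits an assumed local memory budget; otherwise use the lab's own server.
function chooseBackend(
  webgpuAvailable: boolean,
  modelSizeMB: number,
  localBudgetMB: number,
  selfHostedUrl: string,
): InferenceBackend {
  if (webgpuAvailable && modelSizeMB <= localBudgetMB) {
    return { kind: "webgpu" };
  }
  return { kind: "self-hosted", baseUrl: selfHostedUrl };
}

// Build a non-streaming request for Ollama's /api/generate endpoint.
function buildOllamaRequest(baseUrl: string, model: string, prompt: string) {
  return {
    url: `${baseUrl.replace(/\/+$/, "")}/api/generate`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ model, prompt, stream: false }),
    },
  };
}

// Example: a model too large for the assumed 2 GB local budget is routed to
// a self-hosted instance on the default Ollama port.
const fallbackBackend = chooseBackend(true, 8000, 2000, "http://localhost:11434");
console.log(fallbackBackend.kind);
```

In use, the result of `hasWebGPU()` would feed `chooseBackend`, and a self-hosted result would be sent with `fetch(req.url, req.init)`. Keeping the decision rule pure makes the privacy boundary easy to audit, since the only network destination is the address the lab configures.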

A ready-to-assemble library for LLMs

The components are built, tested, and functional. The system is in early alpha: the core interaction loop works, the models run, and the components connect. Since WebGPU handles all computation client-side, hosting requires only a static file server. A lab could fork the repository and deploy a private research infrastructure in under an hour. The toolkit is designed to be self-hosted from the start, with no vendor lock-in, no usage quotas, and no data leaving the premises. Local inference produces rougher results than cloud APIs, but for thematic coding, interview indexing, and document search, reliability and privacy outweigh marginal improvements in output polish.